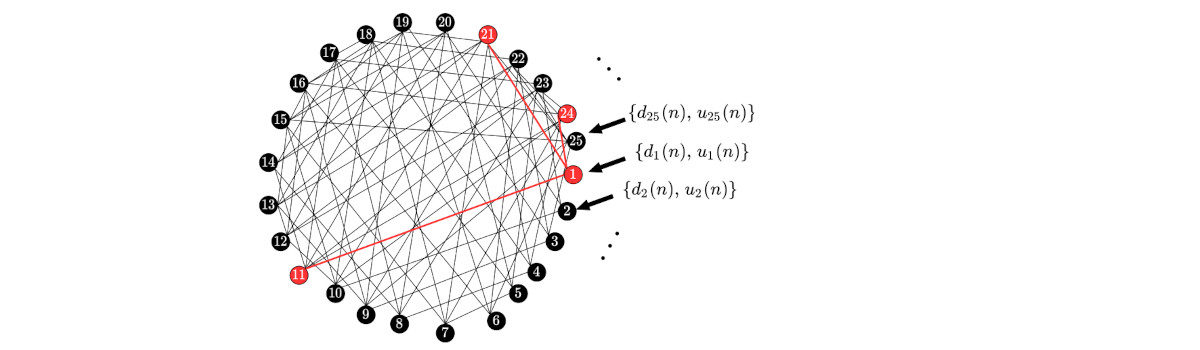

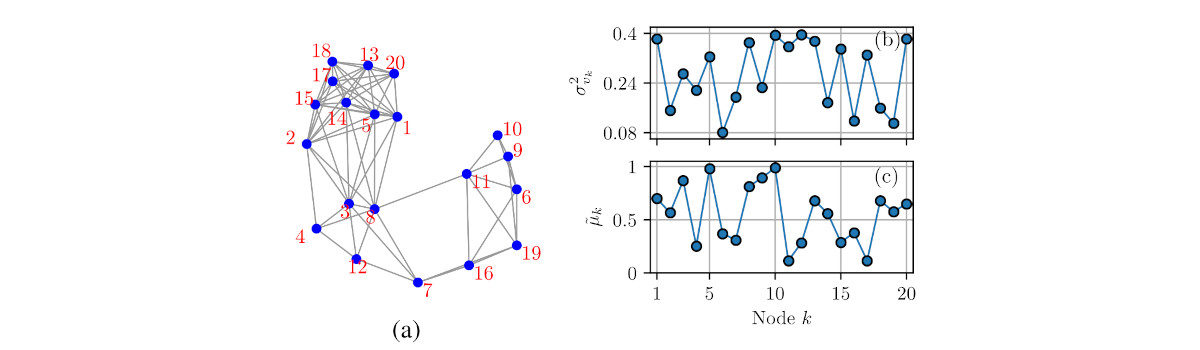

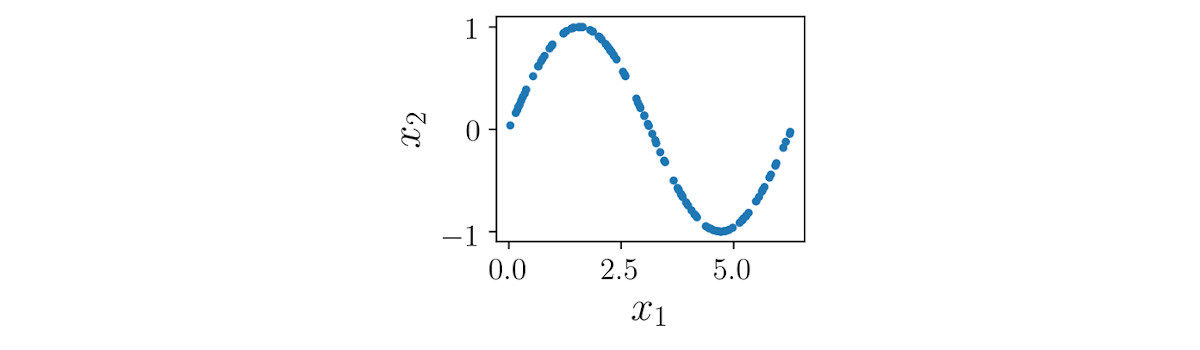

Recently, we proposed a sampling algorithm for diffusion networks that locally adapts the number of sampled nodes according to the estimation error. In this paper, we extend those results and propose some improvements to the algorithm.

Papers published in SBrT 2021

Simpósio Brasileiro de Telecomunicações (SBrT) is the most important Brazilian symposium on telecommunications and signal processing. In the 2021 edition, which took place from September 26th to 29th, we had 4 papers published:

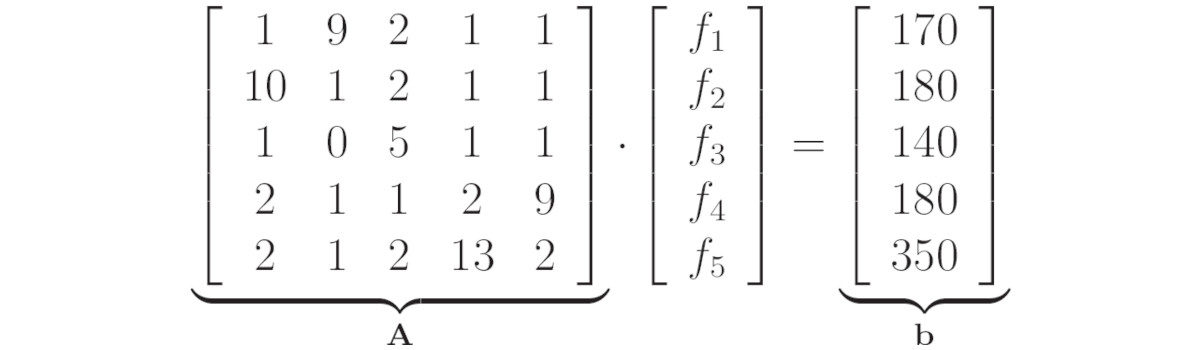

Working With Linear Systems in Python With scipy.linalg

New paper: A Sampling Algorithm for Diffusion Networks

Conference paper presented at the 28th European Signal Processing Conference (EUSIPCO), in which we propose an adaptive sampling method for diffusion networks.

New paper: A Low-Cost Algorithm for Adaptive Sampling and Censoring in Diffusion Networks

This paper summarizes the results obtained by Daniel G. Tiglea while working toward his M.S. degree.

7 Python Code Examples for Everyday Use

On September 27, 2020, the article 7 Python Code Examples for Everyday Use was published on GoSkills. Below, you’ll find the summary and the link to the code on GitHub.

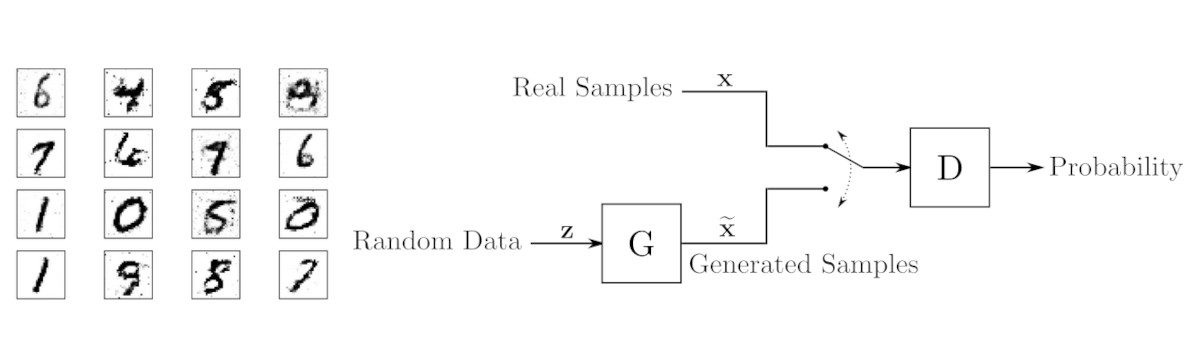

Generative Adversarial Networks: Build Your First Models

How To Set Up a PageKite Front-End Server on Debian 9

On October 25, 2019, the article How To Set Up a PageKite Front-End Server on Debian 9 was published on DigitalOcean. Below, you’ll find the summary and the link to the article on the DigitalOcean website.

Arduino With Python: How to Get Started

On October 21, 2019, the article Arduino With Python: How to Get Started was published on Real Python. Below, you’ll find the summary and the link to the article on the Real Python website.

A Brief Introduction to GANs – SciPy Meetup Talk

On the 15th of August, I presented a talk at the SciPy Meetup – Coders Hub Powered by Giant Steps. Here are the summary of the presentation, the slides, and the code (in Portuguese).